Six weeks into the semester, a student has missed three consecutive weeks of classes in two of her four courses. She has also stopped submitting assignments in a third. By any reasonable measure, she is at risk.

Your early alert system never flagged her. It was designed to, but it failed.

The system pulls enrolment status from the student information system (SIS), where her registration shows as full-time. But in the learning management system (LMS), her credit load shows as part-time because one of her courses is a co-op placement coded differently. The algorithm never triggered because the two systems use different definitions of what counts as enrolled. By the time an advisor notices, she has already submitted a withdrawal request.
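The failure mode is easier to see in miniature. The sketch below is hypothetical, because the article doesn't describe the actual alert logic. The credit threshold, the course codes, the way each system computes load, and the rule that the alert only fires when the SIS and LMS agree on a student's load are all assumptions made for illustration. It shows how a definitional mismatch can silently suppress an alert without any component raising an error.

```python
from dataclasses import dataclass

FULL_TIME_CREDITS = 12  # hypothetical full-time threshold


@dataclass
class Course:
    code: str  # hypothetical course codes
    credits: int
    is_coop: bool = False


def sis_load(courses: list[Course]) -> str:
    # Hypothetical SIS rule: every registered course counts toward load.
    total = sum(c.credits for c in courses)
    return "full-time" if total >= FULL_TIME_CREDITS else "part-time"


def lms_load(courses: list[Course]) -> str:
    # Hypothetical LMS rule: the co-op placement is coded differently,
    # so it is excluded from the credit load.
    total = sum(c.credits for c in courses if not c.is_coop)
    return "full-time" if total >= FULL_TIME_CREDITS else "part-time"


def courses_at_risk(missed_weeks: dict[str, int], threshold: int = 3) -> int:
    # Count courses where consecutive missed weeks reach the threshold.
    return sum(1 for weeks in missed_weeks.values() if weeks >= threshold)


def should_alert(courses: list[Course], missed_weeks: dict[str, int]) -> bool:
    # Hypothetical rule: monitor full-time students only, and only when both
    # systems agree on the load. A disagreement drops the student silently.
    if sis_load(courses) != lms_load(courses):
        return False
    if sis_load(courses) != "full-time":
        return False
    return courses_at_risk(missed_weeks) >= 2


def should_alert_authoritative(courses: list[Course], missed_weeks: dict[str, int]) -> bool:
    # Same rule, but load comes from one agreed system of record (the SIS).
    if sis_load(courses) != "full-time":
        return False
    return courses_at_risk(missed_weeks) >= 2


courses = [
    Course("HIST101", 3),
    Course("BIOL110", 3),
    Course("MATH120", 3),
    Course("COOP200", 3, is_coop=True),
]
missed = {"HIST101": 3, "BIOL110": 3, "MATH120": 0, "COOP200": 0}

print(should_alert(courses, missed))                # False: the systems disagree
print(should_alert_authoritative(courses, missed))  # True
```

Nothing in the first function is "broken" in a technical sense. It simply inherits two definitions that were never reconciled.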

Your institution's student success strategy included exactly this kind of early alert capability. The investment was real. It failed not because of the technology, but because of what sits underneath it: definitions that don't align, systems that don't share a common understanding of who a student is, and no one with the authority to resolve the conflict.

That's not a data problem. It's a strategy problem.

Institutions that get this right start from a simple belief: students deserve to be seen clearly by the systems built to support them. When definitions don't align and systems can't communicate, students don't receive early intervention. They reach for a withdrawal form. Building the architecture correctly is an act of institutional integrity, not a technology upgrade.

This guide maps what a functioning higher education data architecture actually requires. It explains why institutions consistently invest in the wrong elements and in the wrong order, and what it takes to build them in the right sequence. By the end, you'll have a diagnostic you can use before your institution makes its next platform decision.

The Architecture: What the Layers Actually Mean

Think of your institution's data environment as an iceberg.

Above the waterline is the consumption layer: reporting, analytics, dashboards, AI-enabled tools. This is what leadership sees in vendor demos. It's where the strategic value appears to live. And it's where most institutions direct the majority of their investment.

Below the waterline are the three layers that make the consumption layer possible. They're invisible in procurement conversations. They don't demo well. And they're the reason most analytics investments underdeliver.

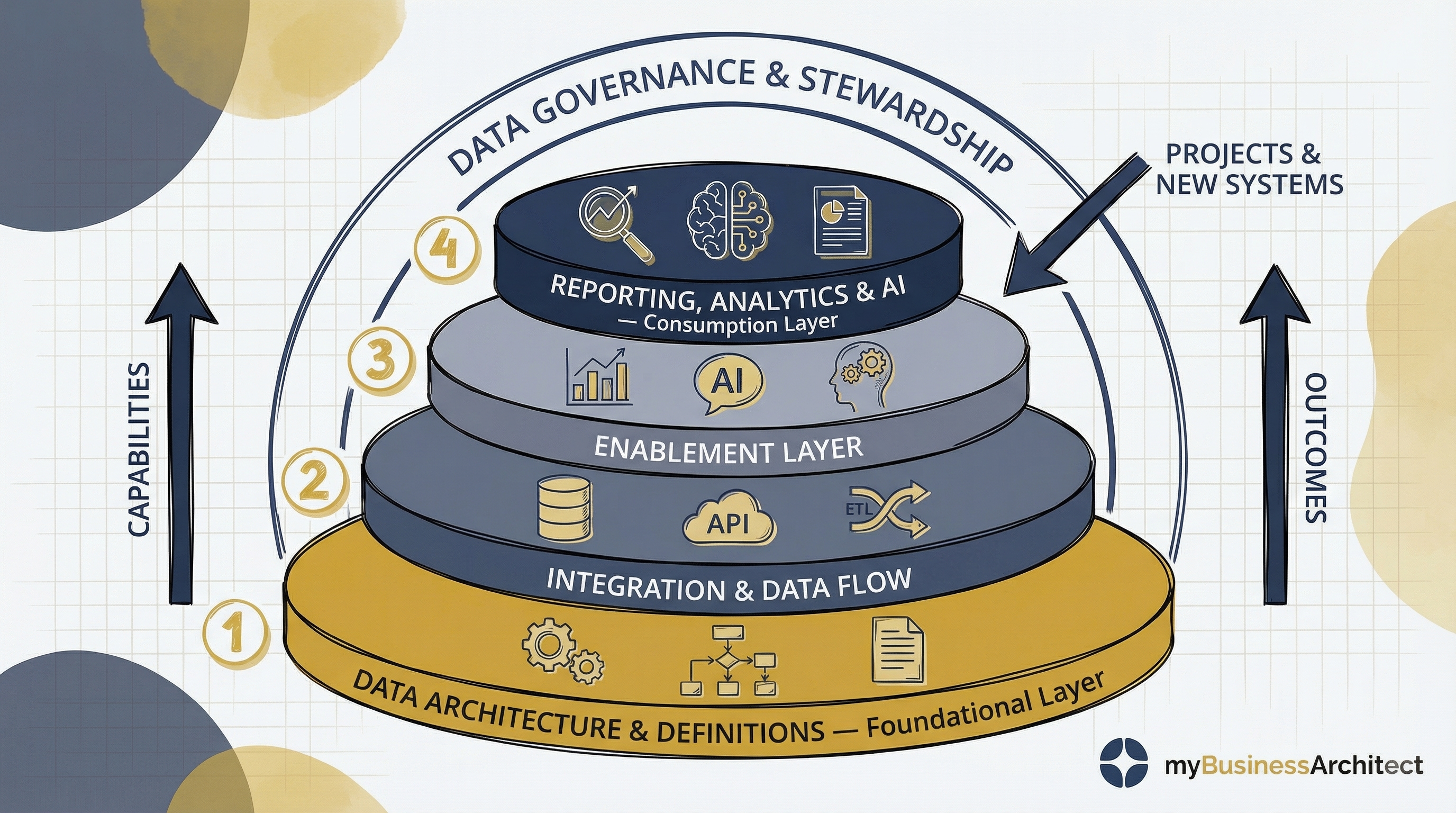

The higher education data architecture: built from the bottom up, governed continuously.


Here's what each layer contains, in the order they should be addressed:

Data Architecture and Definitions (foundational layer)

This is where your institution establishes its core data model, identifies its systems of record, and defines the terms every other layer depends on. What counts as a student? A course completion? An employee? Without agreed definitions at this layer, every layer above it is building on shifting ground. Different systems use different definitions. Different reports produce different numbers. No analytics capability resolves that contradiction.

When this layer hasn't been resolved, you become a translator. Every report your team produces carries an unwritten footnote: these numbers reflect the SIS definition, which differs from the one finance uses. That footnote is not a data problem. It's an authority problem.

Integration and Data Flow

Once the institution has agreed on common definitions and authoritative sources, it can improve how data moves between systems. This layer covers APIs, middleware, ETL processes, and integration platforms. The goal isn't just to connect systems. It's to replace fragile point-to-point interfaces and manual workarounds with consistent, reusable integration patterns that carry the agreed definitions with them.

The Enablement Layer

This is where data services become possible: enterprise reporting, dashboards, analytics, forecasting, and AI-ready datasets. The enablement layer works when data is trusted, connected, ready for consumption, and accessible across domains. It fails when it's asked to enable services on top of definitions that don't agree and integrations that weren't built to a common model. When this layer fails, your analytics team becomes the institution's most expensive manual reconciliation service.

Reporting, Analytics, and AI (consumption layer)

This is where value becomes visible: better decisions, student success insights, reliable financial planning, operational clarity. But this layer only works well when the three layers beneath it are sound. Weak definitions or fragile integrations don't just create variance in the reports. They create variance in the decisions those reports inform.

The two arrows in the diagram tell the same story from opposite directions. Building this architecture is a progressive capability development process: it starts with foundational capabilities (data architecture, metadata management, governance structures) and expands upward into integration management, analytics, and eventually AI. Each layer builds on the one below it. The sequence can't be shortcut. As those capabilities mature, the outcomes become visible: reduced duplication, faster access to trusted information, more reliable integrations, improved reporting quality, and the headroom to pursue future innovation. The outcomes don't appear at the moment of investment. They appear as the capability stack matures from the bottom up.

Data Governance and Stewardship

The arch spanning the entire diagram signals something critical: governance isn't a finishing step and it isn't IT's job alone. It applies at every layer. Who owns this data? Who approves changes to definitions? Who resolves conflicts between systems? Who is accountable when a report is wrong? Those questions have to be answered at every layer, continuously, not once at the end of an implementation project.

Projects and New Systems

Every future technology initiative either reinforces or undermines this enterprise model. New systems, integrations, and projects that bypass agreed standards don't just create immediate problems. They compound the foundation issues already there. Governance has to extend to procurement decisions, not just to existing systems.

Why Institutions Build It Backward

The problem isn't that higher education leaders don't understand the architecture. Most senior IT and data leaders can describe the four layers accurately. The problem is that investment decisions get made at a different altitude than architecture decisions.

Here's what the iceberg looks like from a leadership meeting.

The vendor demo shows you the consumption layer: predictive enrolment models, student success dashboards, AI-enabled advising alerts. The value is visible, compelling, and above the waterline. The presentation runs forty-five minutes, but nobody demonstrates what happens when three upstream systems disagree about who counts as enrolled and the algorithm has to choose. That part is out of sight, below the waterline.

So the analytics platform gets funded. The foundation work doesn't, because it's invisible in the room where the decision gets made.

A demonstration that skips the foundational layer isn't an oversight. It's a sales strategy. A vendor who shows you the consumption layer first doesn't benefit from you asking what's underneath it. An institution with a trusted data foundation is a harder buyer, because it knows which vendor claims to stress-test and which questions to ask before the contract is signed.

The consequences are predictable. The consumption layer surfaces the fragmentation underneath it. Reports produce conflicting numbers. Dashboards require manual validation before anyone trusts them. The business intelligence (BI) team's job shifts from analysis to reconciliation. The early alert system fires, or doesn't fire, based on whatever the SIS and LMS happen to agree on that week.

The investment was real, but the sequencing was wrong. And the sequencing was wrong because the procurement conversation only covered what was above the waterline.

This is why data architecture is a strategy problem, not a technology problem. The investment decisions are made based only on what's visible. The work that determines whether those investments succeed is below the surface.

What the Design4 Framework Reveals

The Design4 framework (Discover, Define, Develop, Deliver) is a continuous adaptive cycle used in business architecture to diagnose where strategy execution breaks down. Applied to a higher education data environment, it shows exactly where the sequencing failure happens and what to do instead.

The Design4 Framework applied to Data Architecture


Discover: What decisions does this data need to support?

Most data strategies begin with an inventory: what data do we have, what systems hold it, where are the gaps? That's useful, but it's not the right starting point.

The Discover phase asks a prior question: who does this institution exist to serve, and what do they need us to know in order to serve them well? Which decisions most depend on trusted data: enrolment planning, student retention, financial sustainability, programme viability? If you can't answer those questions before designing the architecture, you don't know what "trusted data" means for your institution. You know what the vendor's use cases are.

The student success early alert failure in the opening isn't a technology gap. It's a Discover failure. The institution never established which student outcomes the data environment existed to support, or in whose terms success would be measured. The Discover phase is complete when the institution has named the decisions that matter most and agreed on what "trusted data" means for each of them. Before the first vendor is in the room.

Define: The strategic choice your institution is avoiding

"What is a student?" sounds like a data architecture question. It isn't. It's a strategic choice, and the reason institutions avoid making it explicitly is that every domain already has an answer that works for them.

Your registrar's definition serves her accountability: active registrations. Finance's definition serves its accountability: paid tuition accounts. The LMS tracks instructional engagement. Each of those definitions is defensible within its domain. The problem is that enterprise reporting needs one authoritative answer, and choosing it means one domain's definition becomes the standard, which means the others must give up something that's currently serving them.

That's the choice no one wants to make in a meeting room. It's also the choice that, once made, unlocks every layer above it.

Two distinct problems can look identical here. Governance work, including naming a data steward, designating a system of record, and documenting a conflict resolution process, is real and necessary. But governance work answers who owns the definition. It doesn't answer what a concept actually means: what a semester is, for instance, and how it differs from a term. An institution can assign the registrar as the authoritative owner of "student" and still have no agreed answer to whether a co-op placement counts, or whether a student on a leave of absence is enrolled for retention analytics. Resolving that requires a different conversation: not who decides, but what conditions make someone a student, for which purpose, and whether those conditions can differ across reporting uses. Both conversations are necessary. Neither substitutes for the other.
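One way to keep the two conversations separate is to record them separately. The sketch below is a hypothetical data-dictionary entry, not a tool the article prescribes, and its field names are illustrative. The governance fields (steward, system of record) can be complete while the definition questions remain open, which is exactly the co-op and leave-of-absence situation described above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DefinitionEntry:
    term: str
    # Governance conversation: who decides, and which system is authoritative.
    steward: Optional[str] = None
    system_of_record: Optional[str] = None
    # Definition conversation: conditions still unresolved for this term.
    open_questions: list[str] = field(default_factory=list)


def outstanding_work(entry: DefinitionEntry) -> list[str]:
    """List which of the two conversations is still unfinished for a term."""
    gaps = []
    if entry.steward is None or entry.system_of_record is None:
        gaps.append("governance: steward or system of record not assigned")
    for question in entry.open_questions:
        gaps.append(f"definition: {question}")
    return gaps


# Hypothetical entry: ownership is settled, meaning is not.
student = DefinitionEntry(
    term="student",
    steward="Registrar",
    system_of_record="SIS",
    open_questions=[
        "Does a co-op placement count toward enrolment?",
        "Is a student on leave of absence enrolled for retention analytics?",
    ],
)

for gap in outstanding_work(student):
    print(gap)
```

In this sketch, every remaining gap for "student" is a definition gap, so assigning more owners wouldn't close any of them.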

The Define phase is complete when there's a documented data stewardship model: named stewards for each critical data domain, the authoritative system of record for each, and a written conflict resolution process approved by the business owners, not by IT.

Develop: Why the wrong answer arrives faster

The integration and enablement layers represent organisational capability, not just technology. Integrations that move data between systems without a shared model create speed without accuracy. The wrong answer arrives faster.

The Develop question is whether your institution has built the capability to move trusted data consistently, not just data. That requires metadata management, data stewardship roles, and agreed standards for how new systems connect to the enterprise model. It requires that when a new system gets procured, the first question isn't "can we integrate it?" but rather "does it respect our definitions, and who is accountable if it doesn't?"

Most institutions build integrations. Fewer build the capability to maintain them coherently over time as systems change, vendors update their APIs, and new platforms are added. The difference shows up in how much time your BI team spends on reconciliation versus analysis.

The Develop phase is complete when the integration layer is built to a common model, metadata is documented for each critical data element, and the procurement standard requires any new system to conform to the enterprise data model before a contract is signed.
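A procurement standard is easier to enforce when the enterprise data model is written down in a form a review can be checked against. The sketch below assumes a hypothetical data dictionary listing each critical data element, its agreed system of record, and its permitted values. The element names, programme codes, and vendor mapping format are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ElementStandard:
    element: str                     # critical data element (hypothetical name)
    system_of_record: str            # authoritative source agreed by governance
    allowed_values: frozenset[str]   # values the enterprise model defines


def review_vendor_mapping(
    standards: list[ElementStandard],
    vendor_mapping: dict[str, tuple[str, set[str]]],
) -> list[str]:
    """Return findings where a vendor's proposed mapping breaks the model.

    vendor_mapping maps element -> (source system, values the vendor emits).
    """
    findings = []
    for std in standards:
        if std.element not in vendor_mapping:
            findings.append(f"{std.element}: not mapped")
            continue
        source, values = vendor_mapping[std.element]
        if source != std.system_of_record:
            findings.append(f"{std.element}: sourced from {source}, expected {std.system_of_record}")
        extra = set(values) - std.allowed_values
        if extra:
            findings.append(f"{std.element}: undefined values {sorted(extra)}")
    return findings


standards = [
    ElementStandard("enrolment_status", "SIS", frozenset({"full-time", "part-time"})),
    ElementStandard("programme_code", "SIS", frozenset({"BSC-BIO", "BA-HIST"})),
]
vendor = {"enrolment_status": ("LMS", {"full-time", "part-time", "co-op"})}

for finding in review_vendor_mapping(standards, vendor):
    print(finding)
```

Here, an empty list of findings is the signal that the vendor's mapping conforms. Anything else is a question for the relevant data steward before the contract is signed.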

Deliver: Is the data changing what people decide?

The Deliver phase asks the question most institutions skip. Not "is the data reaching the right people?" but rather "is what we're learning changing what we decide to do next?"

Analytics that confirm your existing assumptions aren't closing the strategy-execution gap. They're decorating it. The feedback loop from operations back to strategy requires asking whether your data environment is surfacing evidence that challenges your strategic priorities, not just evidence that validates them.

If your enrolment planning cycle looks roughly the same regardless of what last year's data showed, if your student success initiatives aren't revised based on what the retention data revealed, your consumption layer isn't delivering in the sense that matters. It is reporting. It is not governing.

The Deliver phase is working when operational data is changing strategic decisions, not just confirming them. And when a student like the one in the opening gets a call from an advisor before she submits a withdrawal request.

What It Looks Like When It's Working

A mid-sized regional university with federated affiliated institutions had a familiar problem: a decade of point-to-point integrations, four different definitions of "student" across its SIS, LMS, CRM, and financial system, and reporting obligations that had to satisfy multilingual institutional requirements. Its BI team spent the majority of its time producing reconciliation notes to accompany every report it published.

The institution was also navigating significant financial pressure. That pressure made the data problem more urgent: decisions about programme viability, enrolment projections, and resource allocation were being made on numbers that different offices couldn't agree on. Leadership needed to trust the data at exactly the moment the data was least trustworthy.

Leadership wanted a new analytics platform. The CIO made a different recommendation: before the analytics investment, a six-month foundation phase with a single deliverable. That deliverable was one authoritative definition of "student" for each of the institution's core reporting purposes, approved by a governance committee with representatives from the registrar, finance, academic affairs, and the affiliated institution leads, with a named data steward accountable for each definition.

It wasn't a popular recommendation. The governance committee met nine times. By the fifth meeting, the process nearly collapsed. The registrar's office proposed using active SIS registration as the authoritative definition of enrolled student. The finance office refused: their revenue recognition obligations required a paid-fee trigger, and ratifying the registrar's definition would mean their own downstream reporting would need to be rebuilt. Neither office was wrong. Each definition served its accountability domain accurately. That's precisely the problem.

A vice-president ultimately made the call, and the decision didn't eliminate the tension. It named it, documented it, and gave the integration layer something it could build to: SIS registration as the authoritative definition for operational and student success reporting, paid-fee status as a required flag for financial reporting, and a named data steward accountable for maintaining the distinction. The affiliated institutions were required to conform to the same model within eighteen months.
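The resolution can be expressed as one record with purpose-specific rules on top of it. This is a minimal sketch under stated assumptions. The field names, purpose keys, and steward label are hypothetical. The case also doesn't say whether financial counts require SIS registration as well as a paid fee, so the sketch assumes both are required. The integration layer carries a single record, and the difference between the two offices becomes an explicit rule rather than a reconciliation note.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EnrolmentRecord:
    student_id: str
    sis_registered: bool  # authoritative for operational and student success reporting
    paid_fee: bool        # required flag for financial reporting


# Hypothetical role name: the case names one steward for the distinction.
STEWARD = "Enrolment data steward"

# One approved rule per reporting purpose (purpose keys are hypothetical).
APPROVED_DEFINITIONS: dict[str, Callable[[EnrolmentRecord], bool]] = {
    "operational": lambda r: r.sis_registered,
    "student_success": lambda r: r.sis_registered,
    # Assumption: financial counts need SIS registration and a paid fee.
    "financial": lambda r: r.sis_registered and r.paid_fee,
}


def count_enrolled(records: list[EnrolmentRecord], purpose: str) -> int:
    """Count enrolled students using the approved definition for a purpose."""
    if purpose not in APPROVED_DEFINITIONS:
        # No silent fallback: an unapproved purpose goes back to the steward.
        raise ValueError(f"No approved definition for {purpose!r}; refer to {STEWARD}")
    rule = APPROVED_DEFINITIONS[purpose]
    return sum(1 for r in records if rule(r))


records = [
    EnrolmentRecord("S001", sis_registered=True, paid_fee=True),
    EnrolmentRecord("S002", sis_registered=True, paid_fee=False),
    EnrolmentRecord("S003", sis_registered=False, paid_fee=False),
]

print(count_enrolled(records, "student_success"))  # 2
print(count_enrolled(records, "financial"))        # 1
```

Raising an error for an unapproved purpose is a deliberate choice in this sketch. It stops a new report from quietly inventing its own definition of "enrolled".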

That decision unlocked everything above it.

Once the definitions were authoritative, the integration team could build to a common model instead of mapping between competing ones. The reconciliation notes disappeared from BI reports. The analytics platform, when it was eventually procured, had trustworthy data to surface.

The governance work wasn't glamorous. It didn't produce a dashboard anyone celebrated at a board meeting. But it's the reason the analytics investment delivered on what the vendor had demonstrated.

The CIO who recommended the six-month foundation phase wasn't popular in the short term. She had a belief about what her job was: not to give leadership the platform they wanted, but to make sure the platform they got could do what they were paying for. She was the person in the room who believed the work that didn't demo well was the work that mattered. The governance committee's nine meetings weren't an obstacle to the strategy. They were the strategy.

The institution invested in the layers below the waterline first. The early alert system, rebuilt on a shared enrolment definition, began doing what it was built to do: reaching students before they submitted a withdrawal request.

Four Questions to Ask Before the Next Platform Investment

Before your next platform decision gets made, someone in that room should ask four questions. Not about the platform. About what's underneath it.

The Four Ares are the feedback questions that close the Design4 cycle: Are we doing the right things? Are we doing them the right way? Are we getting them done well? Are we getting the benefits? The first two belong in the Discover and Define phases, before a platform decision is made. The last two belong in the Develop and Deliver phases, while the work is underway. Applied to a higher education data environment, they become a procurement discipline. Ask them before the contract is signed, not after the platform is live. They won't make you popular. They will make you right.

Are we governing the right data decisions?

Are the definitions that matter most to institutional reporting (student, employee, course, programme, credential) documented, authoritative, and agreed across the systems that use them? Or is each system still maintaining its own version?

Are we governing them the right way?

Is there a named person with genuine authority to resolve conflicts between systems and definitions? Is that person in IT or in the business? (It should be in the business, with IT as the implementer of the decision.) Is there a process, not just an intention, for how new definitions get approved and existing ones get updated?

Are we getting the governance work done?

Is the integration layer being maintained as systems change, or is it degrading silently? Does the team building new integrations know which system is the authoritative source for each data element? Are new procurements being reviewed against the enterprise data model before contracts are signed?

Are we getting the benefits?

Is the BI team spending more time on analysis than on reconciliation? Are the decisions that depend on enrolment data, retention data, and financial data being made with confidence, or with a caveat that the numbers are approximate? Is the consumption layer changing what leaders decide, or confirming what they already believed?

If any of these questions produces an uncomfortable silence, that's the layer that needs attention before the next platform decision gets made.

From Diagnosis to Discipline

This page gives you the framework to diagnose which layer is broken and why the investment pattern keeps producing the same result. What it can't give you is the applied practice: the discipline of running the cycle continuously, governing the definitions as the institution changes, and keeping new projects aligned to the enterprise model rather than bypassing it.

That's what the course is for.

Closing the Strategy-Execution Gap (COR-BA-100) follows one institution through all four phases of the Design4 cycle, including the governance decisions that must be made before an architecture can hold. It includes an AI coach and a First Cycle Blueprint built on your institution's specific situation, not a training scenario. The diagnosis is on this page. The discipline is in the course.

Frequently Asked Questions

We don't have a data governance structure yet. Where do we actually start?

Start with the decision causing the most pain. Find the report that generates the most conflict in leadership meetings, identify the definition underneath it that different systems don't agree on, and convene the business owners of those systems to make one authoritative decision. Don't start with a governance framework. Start with one resolved definition. The framework follows the practice, not the other way around.

Who should own the data definitions: IT or the business units?

The business units own the definitions. IT implements and enforces them. When IT owns the definitions, the decisions get made by the people with the least accountability for what the definitions produce. The registrar's office is accountable for enrolment accuracy. Finance is accountable for revenue reporting. Those are the people who need to own the definitions, because they're the ones who live with the consequences when the definitions are wrong.

How do we make the case for foundation work when leadership wants a dashboard in six months?

Use the cost of the status quo. How many hours per week does your BI team spend on reconciliation rather than analysis? How many leadership decisions have been deferred or made with caveats because the data couldn't be trusted? What did the last analytics platform cost, and what did it deliver? Foundation work doesn't compete with the analytics investment. It determines whether the analytics investment pays off.

What do we do about the point-to-point integrations we've already built?

You don't replace them all at once. Map which integrations carry definitions that conflict with your authoritative model and prioritise by impact. Which ones are feeding the systems that produce your highest-stakes reports? Rationalise those first. The rest can follow a planned migration as the enterprise integration layer matures.

How do we prevent new system procurements from recreating silos?

Make data architecture review a formal part of the procurement process. Before a contract is signed, require vendors to demonstrate how their system will conform to your institution's data definitions and integration standards. This isn't a technical hurdle. It's a governance requirement. Institutions that embed this into procurement find that architecture degradation slows significantly within two or three procurement cycles.

What Trustworthy Data Makes Possible

The institutions that get this right don't look like better technology buyers afterward. They look like institutions where a student who's missing class gets a call before she submits a withdrawal request.

That's what trustworthy data produces. Not better dashboards. Decisions that reach the people the institution exists to serve.

The leaders who build that environment start from a conviction: that the unglamorous work (the governance meetings, the definition disputes, the sequencing decisions nobody celebrates) is the work that matters. That conviction is what makes the platforms work.

That's not a data capability. That's who you are when you decide a student deserves a call before she submits a withdrawal request.